Hand & tool segmentation · brick counting

Two zero-shot primitives for the helmet-cam pipeline: a temporally stable hand + tool tracker, and a persistent brick counter. No labels, no fine-tuning.

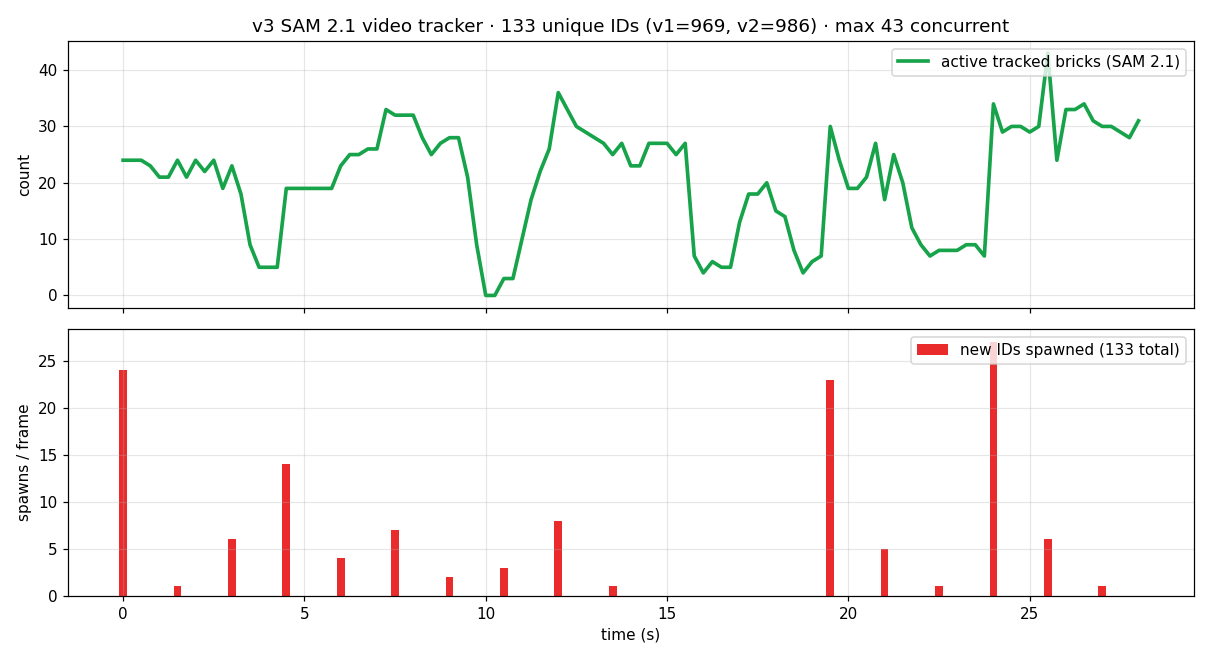

The problem. Per-frame open-vocabulary detection is noisy: hands vanish in 28% of frames, IDs swap on every camera turn, and naïve IoU tracking inflates a 30-second clip into 969 "unique" bricks. We need persistent identity, not per-frame snapshots.

Mechanism (shared by both primitives)

SAM 3.1 provides sparse, high-quality detection (text-prompted, 30 ms per prompt). SAM 2.1's video mode provides a per-object attention memory that propagates a registered mask through subsequent frames and is robust to short occlusions. Combine them: detect rarely, propagate densely, and re-detect periodically to discover new objects.
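The control flow fits in a few lines. This is a minimal sketch, not the pipeline's code. `detect`, `propagate`, and `is_new` are hypothetical callables standing in for a SAM 3.1 text-prompted call, one SAM 2.1 propagation step, and a "not already tracked" test. `detect_every` is the re-detection cadence.

```python
from typing import Callable, Dict, List, TypeVar

M = TypeVar("M")  # mask representation is left abstract


def detect_rarely_propagate_densely(
    num_frames: int,
    detect: Callable[[int], List[M]],  # hypothetical: expensive text-prompted detector (SAM 3.1 role)
    propagate: Callable[[int, Dict[int, M]], Dict[int, M]],  # hypothetical: memory propagation (SAM 2.1 role)
    is_new: Callable[[M, Dict[int, M]], bool],  # hypothetical: True if no active track covers the mask
    detect_every: int,
) -> List[Dict[int, M]]:
    """Return the {obj_id: mask} state after processing each frame."""
    tracks: Dict[int, M] = {}
    next_id = 0
    history: List[Dict[int, M]] = []
    for frame in range(num_frames):
        if tracks:
            # Dense step, every frame: existing tracks move forward and keep their obj_id.
            tracks = propagate(frame, tracks)
        if frame % detect_every == 0:
            # Sparse step: detections only create IDs for objects nobody is tracking yet.
            for candidate in detect(frame):
                if is_new(candidate, tracks):
                    tracks[next_id] = candidate
                    next_id += 1
        history.append(dict(tracks))
    return history
```

Identity lives in `tracks`. An object keeps the ID it was registered with for as long as `propagate` keeps returning it, regardless of how many detector calls intervene.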

Why this beats per-frame detection

A per-frame text prompt has no notion of object identity — frame N's "hand" and frame N+1's "hand" are independent detections. SAM 2.1's memory carries each mask's tokens forward, so identity is a property of the track, not of any single inference call.

Why this beats appearance re-ID

DINOv2 cosine similarity collapses on visually-similar objects (every gray brick looks like every other gray brick). The video memory matches by spatiotemporal token continuity, which doesn't require objects to be visually distinct.
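A nearest-neighbour re-ID rule reproduces the failure. The sketch below is illustrative only. The 2-D "embeddings" are synthetic unit vectors chosen by hand, not DINOv2 outputs, and the 0.9 acceptance threshold is a hypothetical value. By construction, the query is brick 7 seen from a new angle, yet it lands closer to brick 8, with a margin too small to trust.

```python
import math
from typing import Dict, List, Optional, Tuple


def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def reid(query: List[float], gallery: Dict[int, List[float]],
         threshold: float = 0.9) -> Tuple[Optional[int], float]:
    """Appearance re-ID: return (best-matching obj_id or None, margin over the runner-up)."""
    scored = sorted(((cosine(query, emb), obj_id) for obj_id, emb in gallery.items()),
                    reverse=True)
    if not scored or scored[0][0] < threshold:
        return None, 0.0
    runner_up = scored[1][0] if len(scored) > 1 else 0.0
    return scored[0][1], scored[0][0] - runner_up


def unit(deg: float) -> List[float]:
    """Synthetic 2-D embedding: a unit vector at the given angle (illustration only)."""
    r = math.radians(deg)
    return [math.cos(r), math.sin(r)]


gallery = {7: unit(0.0), 8: unit(4.0)}   # two different gray bricks, nearly identical embeddings
best, margin = reid(unit(3.0), gallery)  # brick 7 from another viewpoint
```

Any threshold loose enough to re-acquire brick 7 from a new viewpoint also accepts brick 8, which is the discrimination problem described above.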

per-frame ablation viewer

[Interactive figure: the same 50 test frames run through each approach, original frame beside the result. Legend: hand · PPE (hat / vest) · brick / concrete block · tool (trowel / bucket / etc.).]

video tracking + counting experiments

★ Hand + tool tracker

- Pick anchor frame. Choose a frame where the hand is clearly visible near the bucket (here f22 of a 6-s clip).

- SAM 3.1 detect once. Prompts: `hand`, `gloved hand`, `concrete block`, `bucket`. Filter by score and area, leaving 13 clean masks.

- Register as SAM 2.1 anchors. Each mask becomes an `obj_id` in a video session via `add_new_mask`.

- Bidirectional propagation. Forward + backward from the anchor; SAM 2.1's memory tokens carry each object through neighbouring frames.
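The detect-then-register steps can be sketched in plain Python under stated assumptions. `Detection`, `min_score`, and `min_area` are hypothetical; the text does not give the thresholds used to reach 13 masks. Masks are sets of `(row, col)` pixels. In the real pipeline each kept mask would then be handed to SAM 2.1 via `add_new_mask`. This sketch stops at assigning `obj_id`s and listing the frame order of the two propagation passes.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, List, Tuple

Pixel = Tuple[int, int]


@dataclass(frozen=True)
class Detection:
    """Hypothetical container for one text-prompted detection."""
    prompt: str
    score: float
    mask: FrozenSet[Pixel]  # binary mask as a set of (row, col) pixels


def select_anchors(detections: List[Detection], min_score: float = 0.5,
                   min_area: int = 400) -> Dict[int, Detection]:
    """Keep confident, non-tiny masks and assign each an obj_id (thresholds are placeholders)."""
    kept = [d for d in detections if d.score >= min_score and len(d.mask) >= min_area]
    kept.sort(key=lambda d: d.score, reverse=True)
    return {obj_id: d for obj_id, d in enumerate(kept)}


def bidirectional_order(anchor: int, num_frames: int) -> Tuple[List[int], List[int]]:
    """Frame indices visited by the forward pass and by the backward pass, both starting at the anchor."""
    forward = list(range(anchor, num_frames))
    backward = list(range(anchor, -1, -1))
    return forward, backward
```

Sorting by score before enumerating means the most confident anchor gets the lowest `obj_id`. This choice is arbitrary but keeps IDs stable across reruns with the same detections.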

What survives

Camera pan, hand-occlusion of bricks, brief frame-edge clipping. Mask quality stays tight on the hand close-up throughout.

What breaks it

Long out-of-frame periods (> ~2 s) drift the memory; the object reappears as a hallucinated thin mask. The brick counter solves this by periodically re-anchoring (see below).

Failure modes seen

When two hand instances cross, IDs occasionally swap. Adding a small `multimask_output` margin to the anchor would help; this was not done here.

★ Persistent brick counter

- Frame 0: SAM 3.1 with 3 prompts (`concrete block`, `brick`, `cinder block`); deduplicate masks across prompts at IoU > 0.55. 24 anchors registered.

- Propagate to the next spawn frame (every 6 frames = 1.5 s @ 4 fps). All existing tracks update via SAM 2.1 memory.

- Re-detect with SAM 3.1. For each new candidate mask, check IoU against every active track. If > 0.25 → reject (already tracked). If < 0.25 → register as a new `obj_id`.

- Repeat until the end of the clip. 18 spawn events; only 11 actually fire (in the others, every candidate matched an existing track). 133 unique IDs total, max 43 concurrent.
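The counter uses two IoU gates: 0.55 for merging prompts and 0.25 for spawning. A minimal sketch of both follows, with several assumptions. Masks are sets of `(row, col)` pixels. The largest-mask-first ordering in `dedup_across_prompts` is a guess. `spawn` stands in for the bookkeeping around SAM 2.1 registration rather than reproducing it. A candidate at exactly IoU 0.25, which the text leaves unspecified, is treated as new here.

```python
from typing import Dict, FrozenSet, List, Tuple

Mask = FrozenSet[Tuple[int, int]]


def iou(a: Mask, b: Mask) -> float:
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def dedup_across_prompts(masks: List[Mask], thresh: float = 0.55) -> List[Mask]:
    """Merge the outputs of several prompts: drop a mask if it overlaps a kept one at IoU > thresh."""
    kept: List[Mask] = []
    for mask in sorted(masks, key=len, reverse=True):  # assumption: prefer the larger mask
        if all(iou(mask, k) <= thresh for k in kept):
            kept.append(mask)
    return kept


def spawn(candidates: List[Mask], tracks: Dict[int, Mask], next_id: int,
          thresh: float = 0.25) -> int:
    """Register candidates that no active track covers; mutate tracks and return the next free obj_id."""
    for cand in candidates:
        best = max((iou(cand, t) for t in tracks.values()), default=0.0)
        if best > thresh:
            continue  # already tracked: the existing obj_id is kept by propagation
        tracks[next_id] = cand  # genuinely new object: fresh obj_id
        next_id += 1
    return next_id
```

Because `spawn` adds each accepted candidate to `tracks` immediately, two overlapping candidates from the same re-detection cannot both become new IDs.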

Persistence test

At 15-18 s the helmet looks away — 0 raw detections. The 70 in-flight tracks are preserved by memory; when the camera returns at f78, only the 23 genuinely new bricks (different stack) get fresh IDs.

Why prior versions failed

v1 used IoU between consecutive raw detections — every camera shake fragments IDs (969 unique). v2 added DINOv2 cosine re-ID — only 32 of ~950 possible re-acquisitions hit (986 unique) because gray bricks have near-identical embeddings.

Honest upper bound

133 is still a ceiling, not the true count. Some "bricks" are mortar buckets or worker silhouettes mis-detected by SAM 3.1. Two cleanups would tighten this: reject anchors that overlap detected hands; expire tracks whose mask shrinks < 200 px for > 8 frames.
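Neither cleanup is implemented here, so the following is only a sketch of one way they could look. The 200 px and 8-frame values come from the text. `min_frac`, the fraction of an anchor's pixels that must lie on a hand before it is rejected, is a hypothetical parameter. The function and class names are also illustrative.

```python
from typing import Dict, FrozenSet, List, Tuple

Mask = FrozenSet[Tuple[int, int]]


def overlaps_hand(anchor: Mask, hands: List[Mask], min_frac: float = 0.3) -> bool:
    """True if at least min_frac of the anchor's pixels lie on any detected hand (min_frac is hypothetical)."""
    if not anchor:
        return True  # an empty anchor is useless either way
    hand_pixels = frozenset().union(*hands)
    return len(anchor & hand_pixels) / len(anchor) >= min_frac


class ShrinkExpiry:
    """Expire a track whose mask stays below min_area px for more than max_frames consecutive frames."""

    def __init__(self, min_area: int = 200, max_frames: int = 8) -> None:
        self.min_area = min_area
        self.max_frames = max_frames
        self.small_run: Dict[int, int] = {}  # obj_id -> consecutive small frames so far

    def update(self, tracks: Dict[int, Mask]) -> List[int]:
        """Call once per frame after propagation; removes and returns expired obj_ids."""
        expired: List[int] = []
        for obj_id, mask in list(tracks.items()):
            run = self.small_run.get(obj_id, 0) + 1 if len(mask) < self.min_area else 0
            if run > self.max_frames:
                del tracks[obj_id]
                self.small_run.pop(obj_id, None)
                expired.append(obj_id)
            else:
                self.small_run[obj_id] = run
        return expired
```

Resetting the run counter whenever the mask grows back above `min_area` means brief shrinkage from occlusion does not kill a track; only a sustained thin mask, the hallucination pattern described earlier, does.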

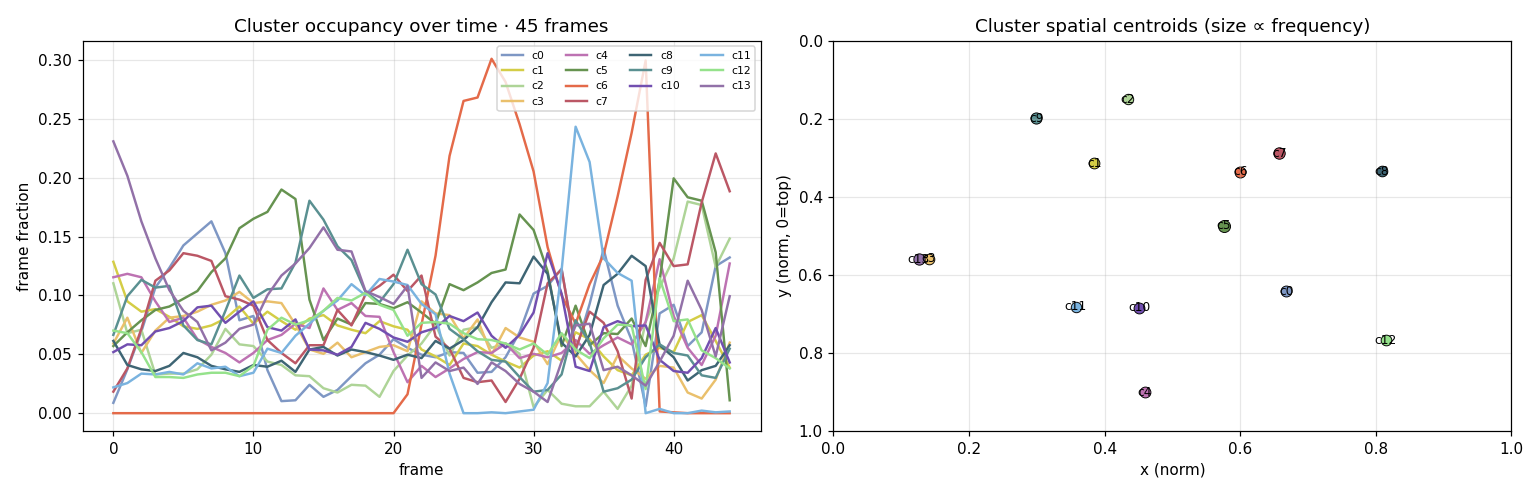

DINOv2 patch clustering · fully-unsupervised baseline

- Per-frame patch features. DINOv2-L on 518×518 input → 37×37 grid of 1024-D tokens, no detection head, no labels.

- Pool across the clip. 45 frames × 1369 patches = 61,605 tokens; L2-normalize.

- K-means K=14. MiniBatch, 3.4 s on the pooled matrix.

- Project labels back to pixels. Each patch grid is upsampled nearest-neighbour to original resolution and tinted by cluster ID.
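The shape bookkeeping and the projection step are simple enough to check by hand. Below is a minimal plain-Python sketch, assuming DINOv2's 14-px patches. The K-means step itself (MiniBatch, K=14) is left to a library such as scikit-learn's `MiniBatchKMeans` and is not reproduced. `upsample_nearest` is a hypothetical helper that maps each output pixel to the patch it falls in.

```python
from typing import List


def patch_grid(side_px: int, patch_px: int = 14) -> int:
    """Tokens per side of a square ViT input (DINOv2 uses 14-px patches)."""
    if side_px % patch_px:
        raise ValueError("input side must be a multiple of the patch size")
    return side_px // patch_px


def pooled_tokens(num_frames: int, grid: int) -> int:
    """Rows of the pooled feature matrix: one token per patch per frame."""
    return num_frames * grid * grid


def l2_normalize(v: List[float]) -> List[float]:
    """Scale a feature vector to unit length so K-means distances behave like cosine distances."""
    norm = sum(x * x for x in v) ** 0.5
    return [x / norm for x in v] if norm else list(v)


def upsample_nearest(labels: List[List[int]], out_h: int, out_w: int) -> List[List[int]]:
    """Nearest-neighbour projection of a patch-level label grid to pixel resolution."""
    gh, gw = len(labels), len(labels[0])
    return [[labels[y * gh // out_h][x * gw // out_w] for x in range(out_w)]
            for y in range(out_h)]
```

With `patch_grid(518)` giving 37, each frame contributes 37 × 37 = 1369 tokens, and 45 frames give the 61,605-row matrix quoted above.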

What it shows. Worker vests, sky, blocks, scaffolding, and ground each get a distinct cluster ID; semantic structure emerges with zero supervision.

What it doesn't. Hands are sub-patch (≤14 px), so they merge with skin/arm clusters. It is useful as a scene-decomposition prior for higher-level tasks, not as a fine-grained hand mask.

What we tried that didn't work — for context

| approach | result | why it failed here |
|---|---|---|
| MediaPipe HandLandmarker | 2 / 50 frames | Trained on web-photo hands; collapses on gloves, fisheye distortion, motion blur, and the heavy occlusion typical of helmet-cam masonry footage. |
| OWLv2 large → SAM 2.1 box prompt | noisy, runaway masks | OWLv2 fires many low-confidence boxes; SAM 2.1's box prompts then segment the dominant object inside the box, which is often the worker's whole arm rather than the hand. |
| SAM 3.1 multiplex with text prompt (per-frame) | 7-10 fps · jitters | One semantic prompt per session: switching concepts resets state, so multi-class tracking needs N parallel sessions. Acceptable if only one concept is needed; otherwise use the mask-anchor pattern. |
| IoU-only brick tracker (v1) | 969 unique IDs / 30 s | Camera shake drops adjacent-frame IoU below the 0.30 threshold; every shake spawns "new" bricks. Solved by SAM 2.1 memory. |
| DINOv2 cosine re-ID (v2) | 986 unique IDs · 32 re-acquisitions | Bricks are visually near-identical (gray rectangles), so cosine similarity between two different bricks is comparable to that of the same brick seen from two angles. The embedding space lacks discrimination. |
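A toy IoU-only tracker reproduces row v1's failure mode. This sketch assumes greedy matching against the previous frame only, with the 0.30 threshold from the table. The 20×20 px "brick" and the pixel shifts are synthetic. A 4 px drift keeps IoU at about 0.67, but a 12 px shake drops it to 0.25. The same brick therefore picks up a new ID on every shake, including when it returns to its original position.

```python
from typing import Dict, FrozenSet, List, Optional, Tuple

Mask = FrozenSet[Tuple[int, int]]


def iou(a: Mask, b: Mask) -> float:
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def iou_only_tracker(frames: List[List[Mask]], thresh: float = 0.30) -> int:
    """v1-style tracker: match detections to the previous frame by IoU alone.

    Returns the number of unique IDs created over the clip.
    """
    prev: Dict[int, Mask] = {}
    next_id = 0
    for detections in frames:
        cur: Dict[int, Mask] = {}
        for mask in detections:
            best_id: Optional[int] = None
            best = 0.0
            for obj_id, prev_mask in prev.items():
                score = iou(mask, prev_mask)
                if obj_id not in cur and score > best:
                    best_id, best = obj_id, score
            if best_id is not None and best >= thresh:
                cur[best_id] = mask  # same brick as last frame
            else:
                cur[next_id] = mask  # no good match: a "new" brick is born
                next_id += 1
        prev = cur  # only the previous frame is remembered
    return next_id


def brick(dx: int) -> Mask:
    """Synthetic 20x20 px brick shifted horizontally by dx pixels."""
    return frozenset((r, c + dx) for r in range(20) for c in range(20))
```

The missing ingredient is memory. Once a brick fails one adjacent-frame match, nothing links it back to its old ID. SAM 2.1's per-object memory supplies exactly that link.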

Take-aways

- Sparse detect + dense propagate. One reliable mask is worth a hundred shaky per-frame detections. SAM 3.1 picks the moments; SAM 2.1 holds the line.

- Identity is structural, not visual. When objects are visually similar (bricks, gloves), appearance re-ID fails. Token-continuity tracking succeeds because it doesn't need objects to look distinct.

- Re-spawn on a fixed cadence. Every K frames, run the detector again and reject candidates that overlap existing tracks. New objects get IDs; old ones don't. This is how the brick counter goes from 969 → 133 unique.

- The right anchor matters. Spend the SAM 3.1 budget on choosing a frame where the target is unambiguous (close-up, well-lit). One bad anchor can poison every downstream propagated frame.

- Knowing what doesn't work is half the answer. MediaPipe, OWLv2-direct-box, per-frame text prompts, and DINOv2-re-ID each break in a specific way that points to the correct primitive: memory-based tracking.